By Dr. Jasmin (Bey) Cowin

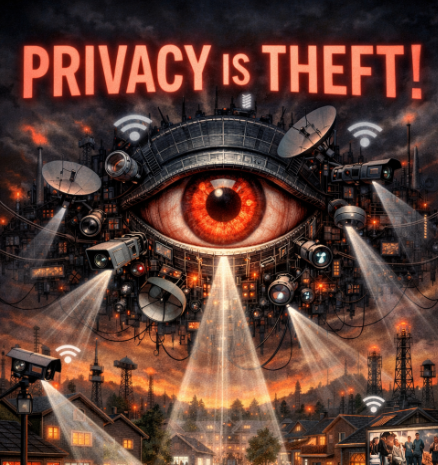

“Secrets are lies. Sharing is caring. Privacy is theft.” (Eggers, 2013, p. 305)

This epigraph from Dave Eggers’ The Circle captures the mood in the courtroom in Santa Fe, New Mexico, where New Mexico Attorney General Raúl Torrez is mounting a landmark case against Meta Platforms, Inc. In Eggers’ dystopian world, these phrases represent the ideological backbone of a society that sacrifices privacy and accountability in the name of progress. In the real world, they serve as a lens through which to view the systemic failures and deliberate design choices that New Mexico alleges have allowed platforms like Facebook and Instagram to perpetuate harm. The case, State of New Mexico v. Meta, represents the first state trial to challenge Meta for exposing children to sexual exploitation and mental health harms while allegedly misrepresenting the safety of its platforms. New Mexico’s strategy pivots away from the protections of Section 230 of the Communications Decency Act, which shields platforms from liability for third-party content. Instead, the state argues that Meta’s algorithms are defective products that actively enable harm. This approach reframes the conversation: Meta is not merely a publisher of user content, but the creator of tools that amplify and encourage dangerous behavior.

Algorithms on Trial: The Case Against Meta

New Mexico Attorney General Raúl Torrez has built a case that treats Meta’s algorithms as central to the harm caused. At the heart of this case is evidence gathered through “Operation MetaPhile,” an undercover investigation that exposed how Meta’s platforms operate in real time. Investigators created fictitious child accounts, including that of a 13-year-old girl named “Issa Bee.” Her posts were innocent: simple updates about school lunches and losing her last baby tooth. Within weeks, her account attracted over 6,700 followers, nearly all of them adult men from countries such as Nigeria, Ghana, and the Dominican Republic. Rather than identifying this as a red flag, Meta’s platform encouraged “Issa Bee” to monetize her account by transitioning to a professional profile. Investigators documented how Meta’s algorithms funneled minor accounts into exploitative networks and recommended harmful content, including groups promoting eating disorders, self-harm, and child sexual abuse material (CSAM). This evidence challenges Meta’s public claims of robust safeguards and commitment to user safety. The state’s argument draws parallels to Mercer’s critique of technology in The Circle: “The tools you guys create actually manufacture unnaturally extreme social needs. No one needs the level of contact you’re purveying. It improves nothing. It’s not nourishing. It’s like snack food… Endless empty calories, but the digital-social equivalent” (Eggers, 2013, p. 134).

New Mexico alleges that Meta’s platforms are engineered to be addictive, exploiting vulnerabilities in users, especially children, to maximize engagement. The state also highlights the gap between Meta’s internal research and its public messaging. One key piece of evidence is Meta’s internal “BEEF” study, which found that 51% of Instagram users reported harmful experiences within just seven days. This starkly contrasts with Meta’s public claims of safety, which the state argues were knowingly exaggerated to maintain its user base and revenue.

Meta’s Defense: Transparency or Deception?

Meta’s defense rests on the premise of transparency. The company argues that by disclosing the imperfections of its safeguards, it has fulfilled its duty to inform users. This argument hinges on the idea that transparency absolves the platform of responsibility for the harm caused by its design. New Mexico, however, counters that this defense is little more than a façade. The state argues that Meta’s disclosures were incomplete and misleading, masking the true extent of the risks posed by its platforms. Investigators documented numerous instances where Meta failed to address explicit harm despite being aware of it. For example, when minor accounts reported being added to groups promoting CSAM, Meta’s response was to suggest that the users leave the groups rather than remove the harmful content. The state’s legal team frames this as a deliberate choice: Meta prioritized engagement metrics over user safety, even when it knew the consequences.

A New Legal Playbook

What makes this case particularly significant is its innovative evidentiary approach. New Mexico’s legal team imported law enforcement methods into civil litigation, using real-time undercover documentation to expose the pathways through which Meta’s algorithms facilitate harm. This shift represents a move away from reliance on internal company documents or whistleblower testimony and toward direct evidence of user experience. This strategy could serve as a blueprint for future lawsuits targeting tech companies. By treating algorithms as products subject to liability, New Mexico is challenging the foundational protections that have shielded platforms like Meta from accountability. Legal scholar Kevin Ofchus (2023) supports this approach, arguing that “algorithms may materially contribute to, substantially assist, and even encourage what creates liability in certain conduct.” He advocates for treating algorithms as products, subject to the same liability standards as physical goods. If New Mexico’s strategy succeeds, it could open the floodgates for similar cases across the United States. The trial also raises important questions about the ethical responsibilities of tech companies in an age where algorithms shape nearly every aspect of our lives.

A Reckoning in the Digital Age

Like the utopian vision of The Circle, Meta’s platforms were built on the promise of connection and empowerment. But as this trial reveals, that promise has come at a cost. Behind the algorithms that drive these platforms lies a system that prioritizes profit over safety, engagement over well-being. Eggers’ words serve as a cautionary tale: “Privacy is theft” (Eggers, 2013, p. 305). The trial in Santa Fe forces us to confront the uncomfortable truth that these platforms are not neutral tools. They are active participants in shaping behavior, amplifying harm, and eroding the very privacy they claim to protect. New Mexico’s case against Meta is more than a legal battle; it is a call to action. It challenges us to demand accountability from the systems that govern our digital lives and to reconsider the cost of a world where algorithms dictate our choices. The verdict in this case is weeks away, but its implications will likely resonate for years to come. If New Mexico prevails, it could mark the beginning of a new era of accountability for tech companies. If Meta succeeds in its defense, it will reinforce the status quo, leaving the question of responsibility for digital harm unanswered. For now, the courtroom in Santa Fe stands as a battleground for one of the most pressing debates of our time.

References

Eggers, D. (2013). The Circle. Alfred A. Knopf.

Ofchus, K. (2023). Cracking the Shield: CDA Section 230, Algorithms, and Product Liability. University of Arkansas Little Rock Law Review, 46. https://papers.ssrn.com/sol3/papers.cfm?abstract_id=4452392

This article was written by Dr. Jasmin (Bey) Cowin, Associate Professor and U.S. Department of State English Language Specialist (2024). She writes as a freelance journalist covering artificial intelligence, AI policy and regulation, global governance, blockchain, and other emerging technology developments, alongside her work on Education for 2060. Connect with her on LinkedIn to inquire about speaking, consulting, and writing services.

Image created with ChatGPT, March 9th, 2026