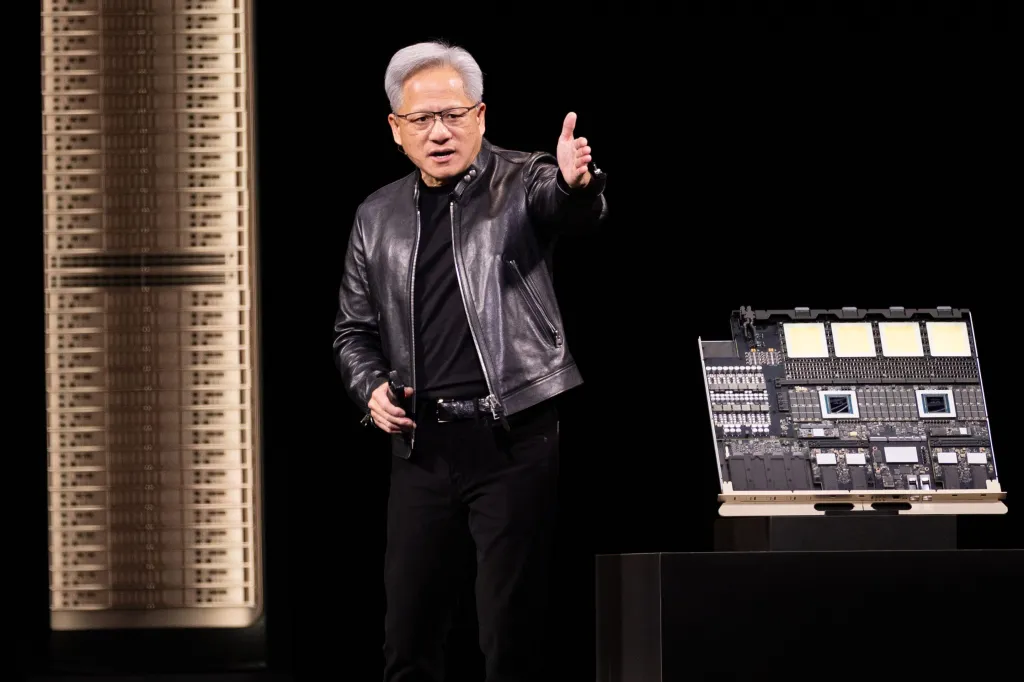

Jensen Huang, clad in his signature leather jacket, addressed nearly 20,000 attendees at Nvidia’s GTC conference in San Jose yesterday, a gathering often dubbed the Super Bowl of AI. He projected a staggering $1 trillion in orders for Nvidia’s advanced AI chips by 2027, a projection that reflects the global surge in AI infrastructure development. Although Nvidia has risen to become the world’s most valuable company, with a market capitalization hovering around $4 trillion, Huang has largely sidestepped the public criticism often directed at other prominent AI figures.

Social media platforms frequently host sharp critiques of executives like OpenAI CEO Sam Altman, with some posts labeling him as “evil.” Companies such as Anthropic, Meta, and Google routinely face scrutiny over the broader societal implications of artificial intelligence, ranging from potential job displacement and copyright disputes to the spread of misinformation and the increasing integration of AI into military applications. In stark contrast, Nvidia’s leader is frequently celebrated as the visionary engineer driving this technological boom. This perception persists even as massive AI data centers, filled with Nvidia’s chips, spark local opposition in communities across the country.

Indeed, nearly every significant advancement in AI, from chatbots and workplace applications to military systems, relies on Nvidia’s integrated hardware, software, and systems. The company has also invested billions in the AI ecosystem, forming partnerships with key players like OpenAI and Anthropic and funding data center operators and emerging AI startups. This deep embedding across the entire AI landscape raises a pertinent question: why has Huang, and by extension Nvidia, remained largely immune to the AI backlash?

A historical parallel is often drawn: suppliers of foundational components during technological revolutions rarely attract the same public scrutiny as the companies that deploy the end products. During the era of fossil fuels, criticism focused mostly on oil companies, not the manufacturers of drilling equipment. Similarly, the railroad barons faced public ire while the producers of steel rails were largely left alone. In the early internet age, cloud providers such as Amazon Web Services enabled the rise of disruptive companies like Airbnb and Uber, yet criticism predominantly targeted the platforms themselves, not the underlying infrastructure providers. Nvidia currently benefits from the same insulation as a “picks and shovels” provider.

However, Nvidia’s announcements at GTC suggest a strategic shift beyond its traditional role as a chipmaker. The company is increasingly positioning itself as a provider of entire AI computing systems, particularly for the new “inference” phase of AI. This phase, in which trained models are run to generate outputs rather than being trained, demands another colossal wave of infrastructure investment. Nvidia’s ambition now extends to controlling the complete stack of systems, software, and platforms that underpin the AI economy.

A central announcement during Huang’s keynote was the introduction of the Vera Rubin platform. This new offering integrates multiple chips and system components designed specifically to run large AI models and “agentic AI” systems. The platform incorporates seven new chips and several rack-scale systems, engineered to support extremely large AI clusters comprising hundreds of thousands of GPUs. Nvidia also unveiled NemoClaw, an open-source platform enabling enterprises to build AI agents, connect them to corporate data, and deploy them on Nvidia hardware.

The company’s aggressive investment strategy continues across the AI ecosystem, with billions poured into dozens of AI startups over the past year. Recent investments include $2 billion in AI cloud company Nebius and backing for former OpenAI CTO Mira Murati’s new venture, Thinking Machines, which plans to deploy over 1 gigawatt of Nvidia-powered compute capacity. Nvidia is also deepening its involvement in autonomous vehicles, with its chips and software platforms gaining traction among car manufacturers developing self-driving systems. At GTC, Huang described AI as a “five-layer cake,” arguing that the AI economy relies on the synchronized scaling of energy, chips, infrastructure, models, and applications. Nvidia occupies a strategically central position within this stack, connecting many of these layers.

While Nvidia has thus far enjoyed the relative insulation afforded to a picks-and-shovels supplier, its push into comprehensive AI systems and the proliferation of its hardware in AI data centers could alter this dynamic. As the company expands its control over the systems powering the AI revolution, it may find itself increasingly exposed to the public debate over AI’s far-reaching consequences.